In this article we will install a secure Prometheus server into an EKS/Kubernetes cluster automatically using Terraform. The advantage of this installation method is that it is fully repeatable and every aspect of the installation is controlled by Terraform.

This Prometheus server accepts connections over HTTPS, and its URL is protected by basic access authentication configured on the NGINX ingress. Terraform provisions the following automatically:

- A DNS CNAME record for your chosen URL.

- A free SSL/TLS certificate for your URL.

- HTTPS access to your URL.

- Password protection for your URL.

- The Prometheus server itself.

Prerequisites

In this example we only show how to install the Prometheus server; installing the prerequisites is out of scope. The following must be in place before you run the Terraform code:

- The NGINX Ingress Controller is installed.

- cert-manager is installed.

- Your domain is set up and managed in Route 53.

- An EKS cluster is set up and running.
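The ingress shown at the end of this article references a cert-manager ClusterIssuer named letsencrypt-prod, so that issuer has to exist as well. If you don't have one yet, a minimal sketch using the Kubernetes provider's kubernetes_manifest resource could look like the following. It assumes cert-manager and its CRDs are already installed (kubernetes_manifest needs them at plan time), that HTTP-01 challenges are solved through the nginx ingress class, and it uses a placeholder contact email and a hypothetical resource name.

```hcl
# Hypothetical ClusterIssuer matching the "letsencrypt-prod" name used in the
# ingress annotation. Requires cert-manager (and its CRDs) to be installed first.
resource "kubernetes_manifest" "letsencrypt_prod" {
  manifest = {
    apiVersion = "cert-manager.io/v1"
    kind       = "ClusterIssuer"
    metadata = {
      name = "letsencrypt-prod"
    }
    spec = {
      acme = {
        # Let's Encrypt production ACME endpoint
        server = "https://acme-v02.api.letsencrypt.org/directory"
        # Placeholder: replace with a real contact address
        email = "admin@example.com"
        # Secret in which cert-manager stores the ACME account key
        privateKeySecretRef = {
          name = "letsencrypt-prod"
        }
        # Solve HTTP-01 challenges through the nginx ingress controller
        solvers = [
          {
            http01 = {
              ingress = {
                class = "nginx"
              }
            }
          }
        ]
      }
    }
  }
}
```

HTTP-01 validation only succeeds once the hostname resolves to the ingress controller's load balancer, which is why the DNS record is part of the same Terraform run.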

Prerequisite Configuration

All Terraform files are published on GitHub in the kubernetes-prometheus-terraform repository. All configuration variables are located in the variables.tf file.

Define your URL

First configure the settings for your URL.

    variable "main_domain" {
      default = "example.com"
    }

    variable "prometheus_hostname" {
      type    = string
      default = "prometheus"
    }

Replace the following:

- main_domain is your Route 53-managed root domain.

- prometheus_hostname is the hostname (subdomain) that will be accessible externally. The default is prometheus, so with the default settings the full Prometheus server URL is prometheus.example.com.
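Instead of editing the defaults in variables.tf, you could also override them in a terraform.tfvars file, which Terraform loads automatically from the working directory. A minimal sketch with hypothetical values:

```hcl
# terraform.tfvars: hypothetical example values that override the defaults
main_domain         = "mycompany.io"
prometheus_hostname = "metrics"

# The ingress builds its host as "${var.prometheus_hostname}.${var.main_domain}",
# so with these values Prometheus would be served at metrics.mycompany.io
```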

Nginx ingress controller configuration

    variable "nginx_namespace" {
      default = "default"
    }

    variable "nginx_name" {
      default = "nginx-ingress-controller"
    }

Adjust the following:

- nginx_namespace is the namespace your NGINX ingress controller is installed in.

- nginx_name is the name of the nginx ingress controller.
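These two variables presumably let Terraform locate the controller's LoadBalancer service so the DNS name can point at it; the repository's own code is authoritative here. A minimal sketch of how such a CNAME record could be created, assuming the controller's Service is named after var.nginx_name (Helm charts name it differently, so check with kubectl get svc in that namespace), that your hosted zone is found by var.main_domain, and with hypothetical resource names:

```hcl
# Hypothetical sketch: look up the Route 53 hosted zone for the root domain
data "aws_route53_zone" "main" {
  name = var.main_domain
}

# Assumption: the controller's LoadBalancer Service is named var.nginx_name
data "kubernetes_service_v1" "nginx" {
  metadata {
    name      = var.nginx_name
    namespace = var.nginx_namespace
  }
}

# CNAME from the Prometheus hostname to the AWS load balancer's DNS name
resource "aws_route53_record" "prometheus" {
  zone_id = data.aws_route53_zone.main.zone_id
  name    = "${var.prometheus_hostname}.${var.main_domain}"
  type    = "CNAME"
  ttl     = 300
  records = [data.kubernetes_service_v1.nginx.status[0].load_balancer[0].ingress[0].hostname]
}
```

A CNAME fits here because AWS load balancers expose a DNS hostname rather than a fixed IP address.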

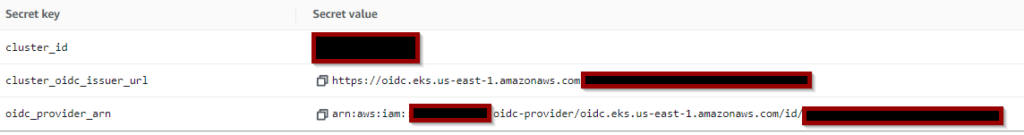

Secrets Configuration

The last step is to store your cluster details as a secret in AWS Secrets Manager. In this example the secret is named main_kubernetes. Most of the values are shown on your EKS cluster’s main page in the AWS console. Configure the secret with the following keys:

- cluster_id is the name of your cluster.

- cluster_oidc_issuer_url is the “OpenID Connect provider URL”, also shown on the main page of your EKS cluster.

- oidc_provider_arn is found in the IAM section of the AWS console. Go to IAM -> Identity providers, click the provider whose name matches your cluster_oidc_issuer_url, and copy the ARN shown in the upper right corner. Alternatively, the aws iam list-open-id-connect-providers command lists the same ARNs.
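Secrets Manager stores key/value pairs like these as a single JSON string. How the repository consumes them is defined in its own code; a minimal sketch of one way to read the secret and configure the Kubernetes provider from it, assuming the secret is named main_kubernetes and that the cluster is reached through the aws_eks_cluster and aws_eks_cluster_auth data sources (the local name kube and the data source labels are hypothetical):

```hcl
# Read the main_kubernetes secret from AWS Secrets Manager
data "aws_secretsmanager_secret_version" "main_kubernetes" {
  secret_id = "main_kubernetes"
}

locals {
  # Decode the key/value pairs (cluster_id, cluster_oidc_issuer_url, oidc_provider_arn)
  kube = jsondecode(data.aws_secretsmanager_secret_version.main_kubernetes.secret_string)
}

# Look up the cluster endpoint and CA certificate by cluster name
data "aws_eks_cluster" "main" {
  name = local.kube["cluster_id"]
}

# Obtain a short-lived authentication token for the cluster
data "aws_eks_cluster_auth" "main" {
  name = local.kube["cluster_id"]
}

provider "kubernetes" {
  host                   = data.aws_eks_cluster.main.endpoint
  cluster_ca_certificate = base64decode(data.aws_eks_cluster.main.certificate_authority[0].data)
  token                  = data.aws_eks_cluster_auth.main.token
}
```

The two OIDC values would be read the same way, for example local.kube["oidc_provider_arn"], wherever the code needs them.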

You also need a Kubernetes secret containing the basic-auth credentials that protect the Prometheus URL. Do the following:

- Run htpasswd -c auth username, replacing “username” with the user you intend to log in to the Prometheus console with. The command prompts for the password you want to use and saves the credentials in a file called “auth”.

    [root@app]# htpasswd -c auth username
    New password:
    Re-type new password:
    Adding password for user username

- Run kubectl create secret generic http-auth --from-file=auth -n prometheus to create the Kubernetes secret from the credentials file created above. If the prometheus namespace does not exist yet (which is the case before the first run), you can do this step later.
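The ingress at the end of this article refers to this secret through data.kubernetes_secret.prometheus-http. A minimal sketch of what that lookup could look like, assuming it targets the http-auth secret created above in the namespace held by var.prometheus_namespace (a variable the ingress code also uses):

```hcl
# Look up the basic-auth secret created with kubectl. ingress-nginx expects the
# htpasswd data under the key "auth", which is why the file is named "auth".
data "kubernetes_secret" "prometheus-http" {
  metadata {
    name      = "http-auth"
    namespace = var.prometheus_namespace
  }
}
```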

Installation

Once you have adapted the configuration to your environment, you can run the Terraform code manually or through any of the popular pipeline integrations, such as GitHub Actions, GitLab CI/CD pipelines, or Jenkins.
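When Terraform runs in a pipeline, the state file has to outlive the build agent; otherwise each run starts without any record of the resources created earlier. A common approach is a remote backend. A minimal sketch with the S3 backend, where the bucket name, key, and region are hypothetical placeholders (backend blocks cannot reference variables, so the values are literal):

```hcl
terraform {
  backend "s3" {
    # Hypothetical values: use your own existing bucket and region
    bucket = "my-terraform-state"
    key    = "prometheus/terraform.tfstate"
    region = "us-east-1"
    # Encrypt the state object at rest
    encrypt = true
  }
}
```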

Verify your installation

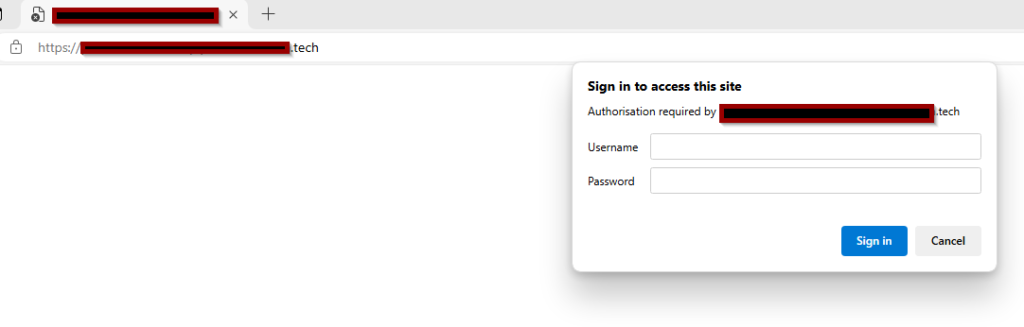

Once the Terraform run has finished successfully, visit your URL.
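If you would rather not assemble the URL by hand, a Terraform output can print it at the end of the run. A minimal sketch; the output name prometheus_url is hypothetical and reuses the same variables as the ingress:

```hcl
# Printed after terraform apply; show it again later with: terraform output prometheus_url
output "prometheus_url" {
  value = "https://${var.prometheus_hostname}.${var.main_domain}"
}
```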

Enter the username and password you previously stored in the Kubernetes secret.

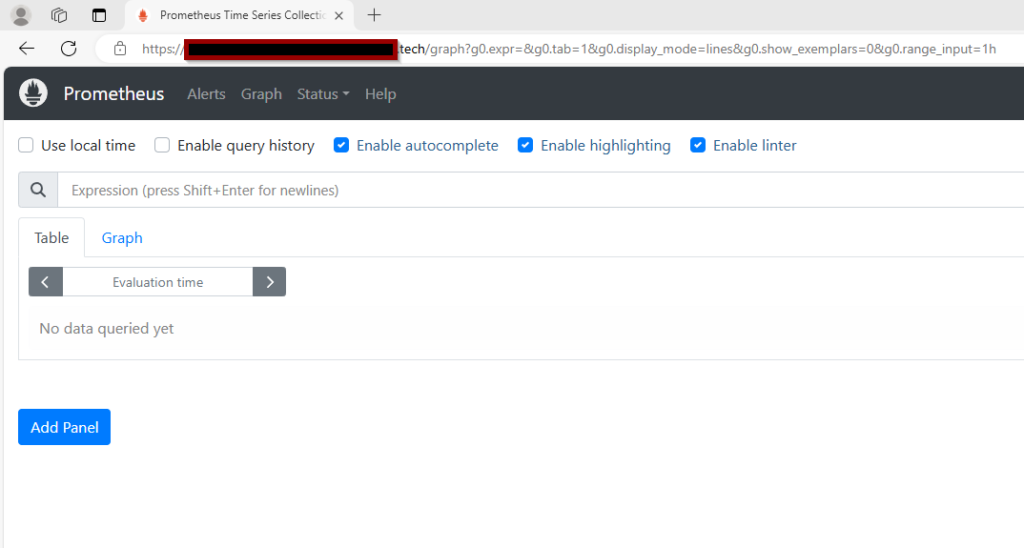

Enjoy your HTTPS- and password-protected Prometheus server running on EKS/Kubernetes.

Additional security options

Additionally, you can restrict access to the URL to a given IP range. Add the following annotation to the ingress in your Terraform code, replacing 192.168.1.1/32 with your IP range (in CIDR notation) or a single IP. This is useful, for example, if this Prometheus instance is scraped by a central Prometheus server in a federated setup, so only that server needs access.

    "nginx.ingress.kubernetes.io/whitelist-source-range" = "192.168.1.1/32"

The modified Terraform code would look like this:

    resource "kubernetes_ingress_v1" "prometheus-ingress" {
      # Wait until the load balancer has assigned an address to the ingress
      wait_for_load_balancer = true

      metadata {
        name      = "${var.prometheus_name}-ingress"
        namespace = var.prometheus_namespace

        annotations = {
          # Request the TLS certificate from the letsencrypt-prod ClusterIssuer via cert-manager
          "cert-manager.io/cluster-issuer" = "letsencrypt-prod"
          "kubernetes.io/tls-acme"         = "true"

          # Basic authentication using the htpasswd secret created earlier
          "nginx.ingress.kubernetes.io/auth-type"   = "basic"
          "nginx.ingress.kubernetes.io/auth-secret" = data.kubernetes_secret.prometheus-http.metadata[0].name
          "nginx.ingress.kubernetes.io/auth-realm"  = "Authentication Required - Prometheus"

          # Only accept requests from this IP range
          "nginx.ingress.kubernetes.io/whitelist-source-range" = "192.168.1.1/32"
        }
      }

      spec {
        ingress_class_name = "nginx"

        # Certificate for the public hostname, stored by cert-manager in this secret
        tls {
          hosts       = ["${var.prometheus_hostname}.${var.main_domain}"]
          secret_name = "${var.prometheus_hostname}.${var.main_domain}.tls"
        }

        rule {
          host = "${var.prometheus_hostname}.${var.main_domain}"

          http {
            path {
              # Route every path to the Prometheus service
              path = "/"

              backend {
                service {
                  name = kubernetes_service_v1.prometheus-service.metadata[0].name
                  port {
                    number = var.prometheus_port
                  }
                }
              }
            }
          }
        }
      }
    }
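The whitelist-source-range annotation accepts a comma-separated list of CIDR ranges, so instead of hard-coding a single address you could keep the allowed ranges in variables.tf. A minimal sketch; the variable name prometheus_allowed_cidrs and its values are hypothetical:

```hcl
# Hypothetical variable holding the ranges allowed to reach Prometheus
variable "prometheus_allowed_cidrs" {
  type    = list(string)
  default = ["192.168.1.1/32", "10.0.0.0/16"]
}

# In the ingress annotations, replace the hard-coded value with:
#   "nginx.ingress.kubernetes.io/whitelist-source-range" = join(",", var.prometheus_allowed_cidrs)
```

Keeping the list in a variable means the allowed ranges can be changed per environment, for example in terraform.tfvars, without touching the ingress resource.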